Introducing SEO on TikTok

Are you looking to boost your visibility and reach on TikTok? Harnessing the power of SEO on TikTok can be the key to optimizing your content and expanding your audience. With millions of active users on the platform, TikTok’s search features give you a great chance to improve your visibility and engage with consumers who are actively looking for information and products.

Prepare to boost your online visibility on TikTok with SEO strategies designed specifically for the platform’s distinct environment. TikTok’s built-in search features can propel your content to new heights. In fact, according to studies, an astonishing 40% of Gen Z users use TikTok as a search engine to quickly discover products and information. That is where the power of SEO truly shows.

@growthgirls Hack your SEO strategy on TikTok. So, you may wonder, what does TikTok have to do with SEO? TikTok actually has search engine features. It will suggest keywords both in the search bar and in the comment section. Are people using it? Yes, they are. According to recent studies, 40% of Gen Z are using TikTok as a search engine. That means that if they’re looking for a product, they may go to TikTok to search for it.

So here’s what you need to do to help the TikTok algorithm serve your content to your target audience. First, you need to start by making a keyword list. If you have an SEO strategy, then you have a keyword list as well. But if you don’t, now is the time to create one. Select words that best describe what you offer. We will call them your base keywords. Select one of your base keywords and put it in the search bar. See what other keywords TikTok suggests. You may want to create a video around one of those keywords.

After you’ve selected your keyword, it’s time to start filming. You want to make sure you say the keyword within the first few seconds. I will let you know why later. After you’re done filming, you need to place your keyword in three places. 1. In the caption. As of September 2022, you can now have up to 2000 words, so make sure your keyword is one of them. 2. The second place you want to have your keyword is in the automated closed captions. See why you must say your keyword within the first few seconds? 3. The third place is the onscreen text. That’s it! Follow me for more Growth Hacks.


What is SEO on TikTok?

SEO on TikTok is the process of optimizing your TikTok content to increase its discoverability on the site and gain more views, likes, and followers. This is achieved through investigating hashtags, focusing on certain terms, and utilizing well-liked platform trends.

TikTok videos have the potential to rank in Google search results, so you may expand your audience and exposure by optimizing your material for SEO.

For digital marketers and content producers, search engine optimization (SEO) is not a new concept, but applying it on TikTok opens up a world of opportunities. So, how can you use a TikTok SEO approach to increase visibility?

For people and organizations that want to raise their visibility and reach specific marketing goals on the popular social media app, a TikTok growth plan is important.

But why exactly is a growth plan required?

A thoughtful growth plan helps you generate quality leads for your company. TikTok’s large user base and engaging content formats can help you draw in new clients and pique their interest in your goods or services.

TikTok also has the potential to be a significant traffic generator for your website. By including links to your website or landing pages in your TikTok content, you can deliberately drive visitors to the pages where they can find out more about your business or make a purchase.

How can you leverage TikTok’s enormous capacity to accelerate the development of your brand?

Here is a list of steps to help your videos appear in searches:

Optimize your profile first

Your TikTok profile acts as a digital business card, so it’s critical to make a good impression on prospective clients right away. Follow these steps to make your profile more appealing:

- Choose a distinctive username: Pick one that accurately represents your brand and is simple to remember. To show up in keyword searches, the username should also be relevant to your niche.

- Pick a name: The name field of your profile, found above your profile photo, should align with your username. This helps your profile show up in search results.

- Use an attractive profile picture: A visually appealing logo works well for companies.

- Create an appealing bio: Write a brief, captivating bio that sums up what your brand does in no more than four words. Include a four-word call to action that guides users in a certain direction. Emojis can add personality and make the bio more interesting.

- Put a link in your bio: Use the link-in-bio tool to direct visitors to your preferred websites. You can link to websites, online stores, and social media accounts. If you have an Instagram or YouTube account, link to those as well to increase your online visibility.

Remember to update your profile regularly to reflect any changes to your brand or product offerings.

Follow the most recent trends

Trends are TikTok’s superpower, which is why you often see many videos using the same music in quick succession. Why? Mostly because it’s popular right now. You can use these in-app trends to your advantage to grow your business. How do you discover what’s popular? Visit the Discover tab, look for trending hashtags there, and create content around them.

88% of TikTokers say the audio is “essential” to their overall experience on the app — and it’s also essential for SEO on TikTok.

Watch the platform, follow well-known creators, and interact with the community to stay up to date on the newest trends. Joining a trend early positions your content to gain momentum quickly and reach a larger audience.

To find popular songs on TikTok, check the Billboard Hot 100 chart. In 2021, over 175 songs that trended on TikTok also appeared on that chart.

Work with TikTok creators

One of the simplest ways to grow your brand on TikTok is to collaborate with creators. The reason is simple: you will be working with people who have already built a community. In other words, you will be borrowing their influence over their audience, which is why this technique is often called “influencer marketing.” How do you find creators to work with? Use a creator marketplace like the one on TikTok.

Utilize your analytical data

TikTok analytics is a powerful tool that offers insightful data on how well your profile and content perform. By upgrading to a Pro account and using this data, you can better understand your audience and improve your TikTok approach. Here are some tips for getting the most from your TikTok analytics:

- Upgrade to a Pro account

- Analyze the demographics of your audience

- Follow video performance

- Investigate content insights

- Check out hot content and hashtags using TikTok analytics

Engage in challenges

Like trends, challenges depend on active participation from companies, groups, and everyday users. A TikTok challenge is simply an invitation to participate in a competition. When your challenge videos are high quality, TikTok may promote them and show them to other challenge participants.

Beyond posting your own entries, you may be able to persuade other people to participate, which brings you more engagement and brand visibility.

The #BottleCapChallenge was a hit on TikTok. It went viral on social media and became a worldwide phenomenon. Participants had to unscrew a bottle cap with a spinning kick, often performed with martial arts or athletic flair.

Users from all around the world took part in the #BottleCapChallenge and uploaded videos to TikTok, which made the challenge enormously popular. Celebrities, athletes, and regular people all joined in and showed off their skills as the trend spread quickly.

Besides entertaining the audience, the challenge gave participants a chance to show off their bottle-cap kicks and creativity. It offered a lighthearted, engaging way to connect with a large audience and sparked interest in the platform.

@michaelfallon #bottlecapchallenge Everyone Online vs. Me..🍾😂 (NAILED IT!!)


Post at the right time

Timing is essential when you are trying to grow your TikTok audience and engagement. Publishing your videos at the right time increases the chance that your followers and the wider TikTok community will see your content.

- Understand your audience’s habits: It’s important to know how and when your audience uses TikTok. This will change based on the demographics and time zones of your particular audience.

- Experiment with publishing times: Once you have a basic sense of when your audience is most active, try publishing at different times of the day and track how each video performs to see which times get the most interaction.

- Consider the busiest times: In general, TikTok usage peaks in the evenings and on weekends, when people have more free time.

- Be consistent: On TikTok, consistency is crucial. By posting regularly at the right times, you teach your audience to anticipate your content, which increases the chance of engagement and helps you build a loyal following.
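The experiment described in the list above can be tracked with a short script. This is only a minimal sketch: the `posts` records are made-up numbers standing in for figures you would copy by hand from your own analytics, and "engagement rate" is assumed here to mean interactions divided by views.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (hour of day posted, views, likes + comments + shares).
posts = [
    (9, 1200, 84),
    (9, 900, 45),
    (19, 3000, 330),
    (19, 2500, 250),
    (22, 1800, 90),
]


def engagement_rate_by_hour(records):
    """Return {hour: mean engagement rate} for each posting hour."""
    rates = defaultdict(list)
    for hour, views, interactions in records:
        if views > 0:  # skip videos with no views to avoid dividing by zero
            rates[hour].append(interactions / views)
    return {hour: mean(values) for hour, values in rates.items()}


by_hour = engagement_rate_by_hour(posts)
best_hour = max(by_hour, key=by_hour.get)
for hour in sorted(by_hour):
    print(f"{hour:02d}:00  {by_hour[hour]:.1%}")
print("Best hour so far:", best_hour)
```

With only a handful of videos per time slot the averages are noisy, so treat the result as a hint for the next round of tests rather than a final answer.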

Target keywords

A useful TikTok growth tip is to choose carefully the terms that users are searching for on the platform. By developing content around these keywords, you cater to your target audience’s interests and improve the likelihood that people will find your videos.

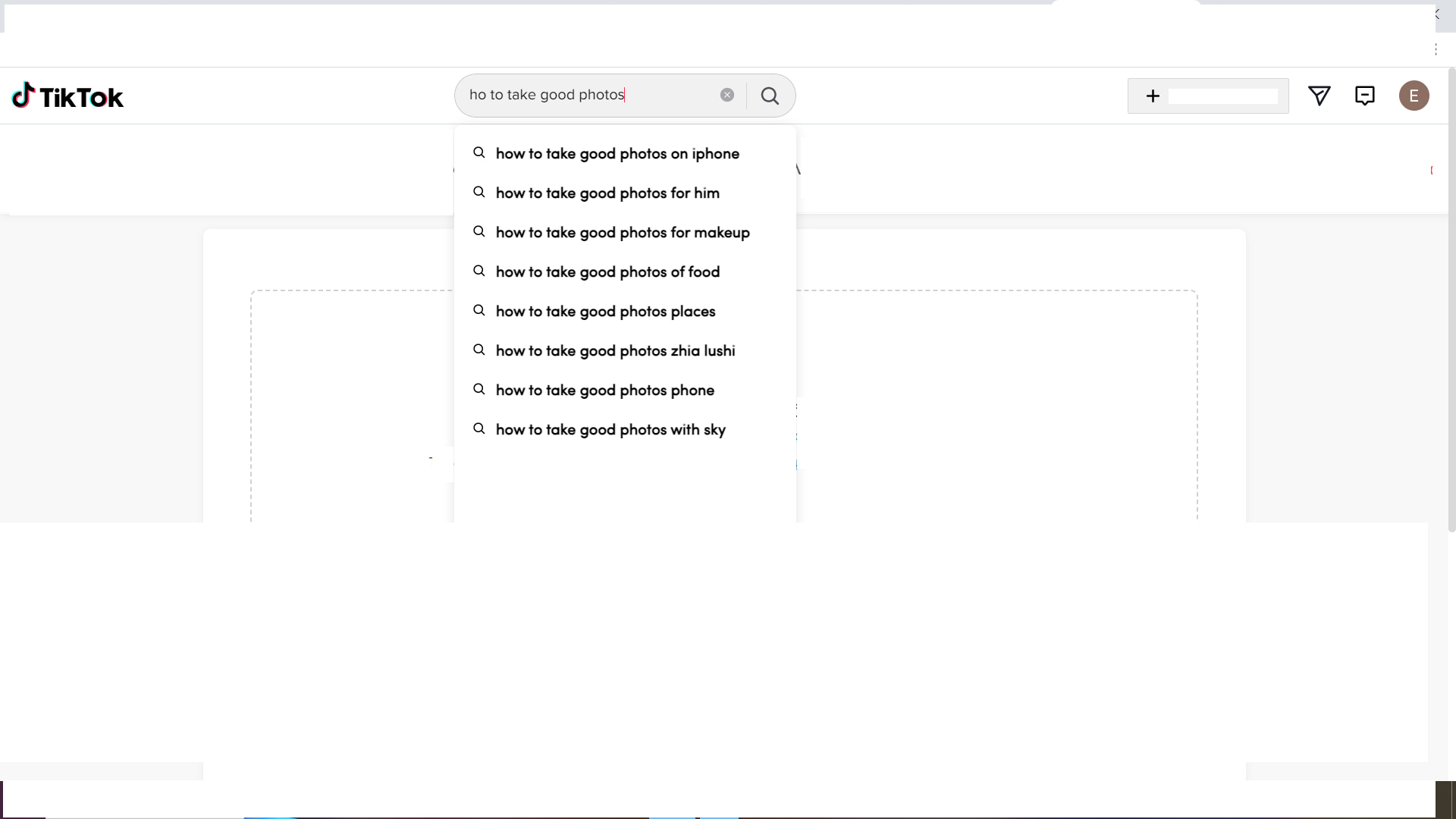

On TikTok, it is simple to find relevant terms. Start typing in the search bar and look at the suggested terms that appear. Note the keywords that apply to your topic and work them into your video ideas. Aligning your content with these keywords can improve its visibility and help you reach more users who are actively looking for that information.

Include keywords in your content

Once you’ve finished your TikTok keyword research, start incorporating the keywords into your videos’ titles, descriptions, and subtitles. This includes any text that appears on the screen, such as song lyrics or explanations.

Also, make sure you say the keywords aloud! TikTok’s algorithm is said to rank videos higher when the keyword is actually spoken.

To make it easier for people to find your posts, you should also include your keywords in any hashtags you use. Use both your primary keyword and any logical variants of it. But don’t go overboard. Make sure you know the ideal number of hashtags for each platform.

Finally, include your most relevant target keywords in your TikTok profile. Your profile will then be more prominent when people search for these terms. It also helps potential followers decide whether to follow you by telling them what sort of content you produce.
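As an illustration of the hashtag advice above, here is a small sketch that turns a primary keyword and its variants into a short, de-duplicated hashtag list. The function name `build_hashtags` and the default cap of five tags are assumptions made for this example, not TikTok rules.

```python
import re


def build_hashtags(primary_keyword, variants, max_tags=5):
    """Turn keywords into hashtags, de-duplicated and capped at max_tags.

    max_tags is an assumed limit chosen for illustration only.
    """
    tags = []
    for phrase in [primary_keyword, *variants]:
        # Keep lowercase letters and digits only, since hashtags have no spaces.
        tag = "#" + re.sub(r"[^0-9a-z]", "", phrase.lower())
        if len(tag) > 1 and tag not in tags:
            tags.append(tag)
    return tags[:max_tags]


tags = build_hashtags(
    "retinol routine",
    ["Retinol Routine", "retinol for beginners", "night skincare"],
)
print(" ".join(tags))
```

The primary keyword is listed first so that it survives the cap even when many variants are supplied.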

Benefit from SEO on TikTok

You can use a variety of TikTok SEO techniques to optimize your videos so they receive more views. In other words, these techniques can help your posts go viral by placing your video content near the top of TikTok’s search results.

Why is this crucial?

Because more people are using TikTok instead of Google to run searches.

Therefore, you want your videos to appear at the top of TikTok’s search page, just as you would want your pages to appear at the top of Google’s SERP.

How does SEO on TikTok work?

There are three main elements in a video that you should keep in mind:

- Speech or audio

- Captions

- Description

Remember that experts suggest it’s best to verbalize your keyword in the first few seconds of your video to get TikTok’s attention.
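Before publishing, you could check a planned video against the three elements above with a small script. This is a sketch under stated assumptions: `SpokenSegment`, `keyword_placement_report`, and the three-second `early_window` are hypothetical names and values invented for this example, and the script works on text you type in yourself rather than on any TikTok API.

```python
from dataclasses import dataclass


@dataclass
class SpokenSegment:
    """One line of a video's speech transcript (hypothetical structure)."""
    start_seconds: float
    text: str


def keyword_placement_report(keyword, segments, captions_text, description,
                             early_window=3.0):
    """Report where a keyword appears in a planned video.

    early_window is an assumed cutoff for "the first few seconds".
    """
    needle = keyword.lower()
    spoken_times = [s.start_seconds for s in segments if needle in s.text.lower()]
    return {
        "spoken": bool(spoken_times),
        "spoken_early": any(t <= early_window for t in spoken_times),
        "in_captions": needle in captions_text.lower(),
        "in_description": needle in description.lower(),
    }


segments = [
    SpokenSegment(0.5, "Here is my retinol routine for beginners"),
    SpokenSegment(6.0, "Start with a low concentration"),
]
report = keyword_placement_report(
    keyword="retinol routine",
    segments=segments,
    captions_text="Night skincare",
    description="My retinol routine for sensitive skin #retinol",
)
print(report)
```

Any `False` entry in the report points to a place where the keyword is still missing, here the captions.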

@skinbyhelen No more retinol scaries with the iconic RoC Retinol Capsules @RoC Skincare #paidad #RoCRetinol #Retinol


Making use of TikTok’s advantages and applying SEO tactics to increase your visibility can drastically change the way people see your company. With TikTok keyword SEO as your guide, you can discover new ways to increase your reach, interact with your target audience, and deliver measurable results. Each growth hack, whether it is profile optimization, trending hashtags, collaboration with other TikTok creators, or analysis of your data, plays a part in maximizing your TikTok potential.

Start using these TikTok growth strategies right away. Seize the opportunity of SEO on TikTok as your account gains momentum, engagement increases, and your business grows in this ever-changing social media ecosystem.

Keep up with trends, adjust as they change, and let the TikTok community help expand your business. Remember that the opportunities are endless when you put the tactical power of SEO on TikTok to work.

Grow your TikTok presence alongside your other social channels by getting in touch with Growthgirls.